Blog Highlights

- Operational breakdowns often go unnoticed until teams start compensating manually, and task automation is usually introduced as a reaction rather than a design choice

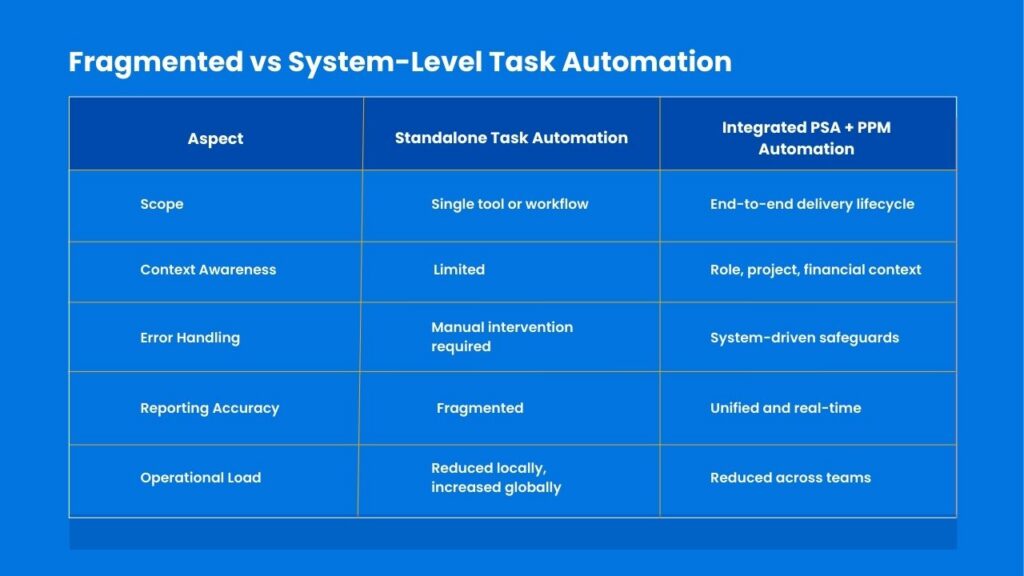

- Standalone task automation tools solve isolated problems but create supervision overhead by ignoring delivery, resource, and financial context

- True task automation depends on orchestration, placing work correctly across dependencies instead of merely triggering actions

- Effective task automation requires mapping operational dependencies, embedding decision logic, centralizing rules, handling exceptions, and measuring human intervention

- Time saved per task is a misleading automation metric; system reliability, forecast accuracy, and reduced manual reconciliation matter more

- Integrated PSA + PPM platforms enable preventative, context-aware task automation that scales without shifting complexity back onto people

Most operational breakdowns accumulate silently. A missed handoff between teams. A task updated in one system but not another. A project that looks healthy on paper while delivery teams are compensating manually behind the scenes.

None of these feel like failures in isolation. Over time, they become patterns. Patterns become norms. Norms become accepted friction.

Task automation is often introduced at this stage as a reaction. A script here. A tool there. A workflow patched together to fix one visible delay. What rarely gets questioned is whether those fixes are reducing work or redistributing it.

This is where many organizations unknowingly create more operational drag while believing they are becoming more efficient.

What Task Automation Actually Means in Real Operations

Task automation is frequently misunderstood as a productivity shortcut. In practice, it is an architectural decision.

At its core, task automation is the systematic removal of manual effort from repeatable operational actions. This includes:

- Task creation triggered by events

- Status updates based on real progress

- Resource allocation adjustments

- Approvals and escalations

- Notifications tied to dependency changes

The critical detail is not the action being automated. It is the context in which that action exists.

Automating a task without understanding its upstream and downstream dependencies is not automation. It is delegation to software without governance. This distinction is where most task automation tools fall short.

The Hidden Architecture of Modern Work

Every IT and services-driven organization operates on an invisible framework:

- Projects are broken into tasks

- Tasks depend on roles, availability, and approvals

- Time tracking feeds billing and forecasting

- Changes ripple across delivery, finance, and reporting

When these elements are managed in isolation, teams rely on human intervention to keep the system coherent.

- Manual follow-ups

- Cross-checking dashboards

- Reconciling mismatched data during reviews

Automation should remove this cognitive overhead. Instead, many setups amplify it.

Why Standalone Task Automation Tools Create More Work

Standalone task automation tools are designed to solve narrow problems well. That is also their limitation.

They automate actions inside their own boundary, not across operational reality. Common outcomes include:

- Tasks auto-created without resource availability checks

- Status changes that do not reflect billing readiness

- Notifications sent without understanding priority context

- Automation rules that conflict across tools

What emerges is a new layer of work: supervision. Teams now spend time validating automation output, correcting misfires, and explaining discrepancies during audits and reviews. Automation has occurred. Efficiency has not.

The Real Problem: Placement, Not Execution

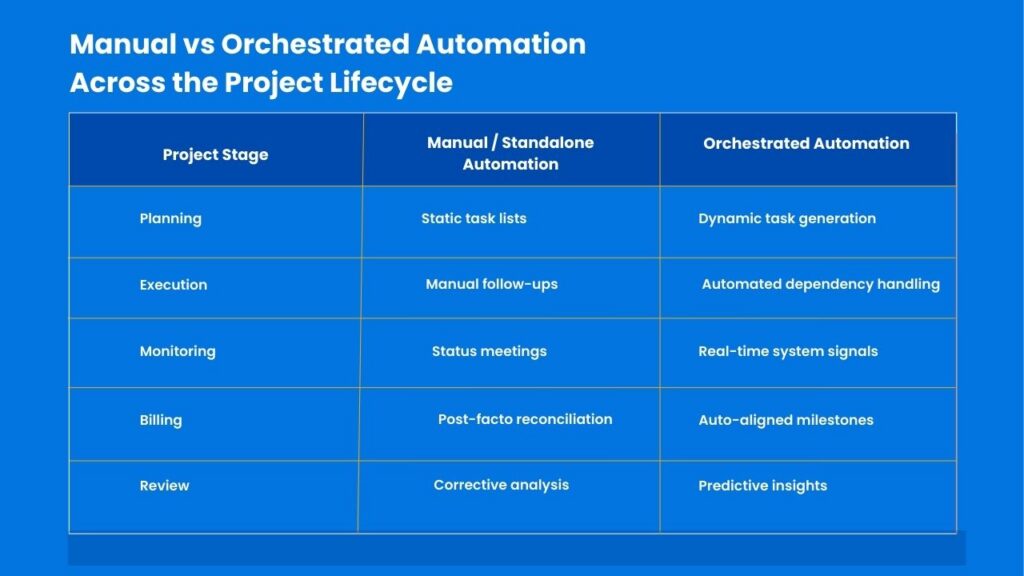

Most tasks are not complex to execute. They are complex to place correctly. A task assigned too early creates idle time. Assigned too late, it becomes a blocker. Assigned without financial context, it disrupts forecasting.

Task automation fails when it focuses only on execution triggers and ignores orchestration logic. Orchestration answers questions like:

- Who should act now, and who should wait

- What changes if this task is delayed

- How does this impact cost, margin, or utilization

Without orchestration, automation becomes noise.
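The three orchestration questions above can be expressed as functions over a shared dependency model. The Python below is a minimal sketch, not a production scheduler and not how any particular product works; the `Task` fields, the sample project, and the idle-cost model for delays are hypothetical assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    owner: str
    cost_per_day: float  # hypothetical daily cost of the capacity reserved for this task
    depends_on: list[str] = field(default_factory=list)


def ready_tasks(tasks: dict[str, Task], done: set[str]) -> list[str]:
    """Who should act now: unfinished tasks whose dependencies are all complete."""
    return [
        t.name
        for t in tasks.values()
        if t.name not in done and all(dep in done for dep in t.depends_on)
    ]


def downstream(tasks: dict[str, Task], name: str) -> set[str]:
    """What changes if `name` slips: every task that depends on it, directly or transitively."""
    affected: set[str] = set()
    frontier = [name]
    while frontier:
        current = frontier.pop()
        for t in tasks.values():
            if current in t.depends_on and t.name not in affected:
                affected.add(t.name)
                frontier.append(t.name)
    return affected


def delay_cost(tasks: dict[str, Task], name: str, delay_days: int) -> float:
    """Cost impact under a crude assumption: reserved downstream capacity sits idle for the whole delay."""
    return delay_days * sum(tasks[n].cost_per_day for n in downstream(tasks, name))


# Hypothetical four-step engagement: design -> build -> test -> invoice.
tasks = {
    "design": Task("design", "ana", 800.0),
    "build": Task("build", "raj", 900.0, ["design"]),
    "test": Task("test", "lee", 700.0, ["build"]),
    "invoice": Task("invoice", "finance", 500.0, ["test"]),
}

print(ready_tasks(tasks, done={"design"}))  # ['build']: test and invoice must wait
print(sorted(downstream(tasks, "build")))   # ['invoice', 'test']
print(delay_cost(tasks, "build", 2))        # 2400.0
```

The point of the sketch is that "who acts now", "what slips", and "what it costs" are all answered from the same dependency data, rather than from a trigger that only knows about one action.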

How to Automate Tasks Without Breaking Workflows

Automation that actually reduces effort follows a disciplined sequence.

1. Map operational dependencies first

Understand how tasks affect delivery, billing, and reporting before automating them. This prevents automation from triggering work that the system is not operationally ready to support.

2. Automate decisions, not just actions

If a task is created, the system should already know priority, owner, and impact. Without embedded decision logic, automation only shifts judgment back to people.

3. Centralize automation logic

Automation rules should live in one system, not across disconnected tools. Distributed logic creates conflicts that teams end up resolving manually.

4. Treat exceptions as first-class scenarios

Automation must anticipate variance, not collapse when reality deviates. Most operational effort comes from edge cases, not the happy path.

5. Measure human intervention

If people are still stepping in frequently, automation is incomplete. The true cost of automation is revealed in how often humans need to override it.
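Steps 2 through 5 can be illustrated together with a small rule engine. This is a hypothetical Python sketch, not a description of any vendor's engine: the event names, the `budget_at_risk` field, the 10,000 priority threshold, and the `ops_lead` escalation owner are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Event:
    kind: str              # e.g. "phase_completed" (hypothetical event names)
    project: str
    budget_at_risk: float  # hypothetical financial context attached to the event


@dataclass
class Decision:
    action: str
    owner: str
    priority: str


# A rule returns a Decision when it applies to the event, otherwise None.
Rule = Callable[[Event], Optional[Decision]]


def phase_rule(e: Event) -> Optional[Decision]:
    # Step 2: owner and priority are decided from context, not left to a person.
    if e.kind == "phase_completed":
        priority = "high" if e.budget_at_risk > 10_000 else "normal"  # hypothetical threshold
        return Decision("create_next_phase_tasks", "delivery_lead", priority)
    return None


def billing_rule(e: Event) -> Optional[Decision]:
    if e.kind == "milestone_accepted":
        return Decision("flag_invoice_ready", "finance", "normal")
    return None


class RuleEngine:
    """Step 3: every automation rule is registered in one place."""

    def __init__(self, rules: list[Rule]) -> None:
        self.rules = rules
        self.automated = 0
        self.escalated = 0

    def handle(self, event: Event) -> Decision:
        matches = [d for d in (rule(event) for rule in self.rules) if d is not None]
        if len(matches) == 1:
            self.automated += 1
            return matches[0]
        # Step 4: no matching rule, or more than one, is an explicit exception path.
        self.escalated += 1
        return Decision("review_manually", "ops_lead", "high")

    def intervention_rate(self) -> float:
        """Step 5: share of events that still needed a human."""
        total = self.automated + self.escalated
        return self.escalated / total if total else 0.0


engine = RuleEngine([phase_rule, billing_rule])
engine.handle(Event("phase_completed", "P1", 25_000))  # automated, high priority
engine.handle(Event("milestone_accepted", "P1", 0))     # automated, routed to finance
engine.handle(Event("scope_change", "P1", 5_000))       # no rule applies: escalated
print(round(engine.intervention_rate(), 2))             # 0.33
```

Treating any multi-rule match as a conflict is a deliberately conservative simplification; a real engine might define rule precedence instead. Tracking the intervention rate over time is what shows whether the rule set is actually absorbing work.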

Where IT Leaders Misjudge Automation ROI

The most common metric used to justify task automation is time saved. Time is a misleading proxy. More accurate indicators include:

- Reduction in cross-team clarification

- Fewer project review corrections

- Improved forecast reliability

- Lower dependency-related delays

- Reduced need for manual reconciliation

Standalone task automation tools often score well on time saved per task. They score poorly on system reliability. This gap is rarely surfaced in vendor demos. It shows up months later during delivery reviews.

Why Task Automation Breaks at Scale, Not at the Start

Task automation rarely fails during early adoption. In pilot phases, workflows are predictable, teams are small, and exceptions are limited. Automation rules appear reliable because the system is operating under ideal conditions.

The pressure comes later. As project volume increases, teams work in parallel, priorities shift more frequently, and dependencies multiply. Automation rules that once felt sufficient start to collide. Tasks trigger out of sequence. Exceptions become common rather than occasional. What was manageable through light oversight now requires constant intervention.

This is where standalone task automation tools begin to show strain. Their rules are static, their context is narrow, and their ability to adapt is limited. Each new edge case adds another rule, another override, another manual correction.

Organizations often misread this moment as a tooling gap or a training issue. In reality, it is an architectural limit. Automation that is not designed to scale with operational complexity will always break under real load.

Sustainable task automation is not proven in pilots. It is proven when the system continues to make correct decisions as complexity increases.


Task Automation in PSA + PPM Environments

In PSA (professional services automation) + PPM (project portfolio management) systems, task automation behaves differently because the system has visibility into the full delivery context. Automation can:

- Create tasks based on project phase transitions

- Adjust assignments based on utilization thresholds

- Trigger approvals tied to financial exposure

- Update forecasts as work progresses

- Align delivery actions with invoicing readiness

Here, automation can be preventative rather than reactive. Many problems are addressed before they surface, because the system can recognize patterns that people would catch too late.
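Two of the capabilities listed above, adjusting assignments based on utilization thresholds and triggering approvals tied to financial exposure, can be sketched in a few lines of Python. This is an illustrative assumption, not Kytes' implementation: the 85% utilization cap, the 20,000 approval limit, and the `Person` data model are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

UTILIZATION_CAP = 0.85     # hypothetical: never plan anyone above 85% utilization
APPROVAL_LIMIT = 20_000.0  # hypothetical: exposure above this needs a manager's approval


@dataclass
class Person:
    name: str
    skill: str
    capacity_hours: float  # available hours in the planning window
    booked_hours: float    # hours already committed in that window

    def utilization_if(self, extra_hours: float) -> float:
        return (self.booked_hours + extra_hours) / self.capacity_hours


def assign(skill: str, hours: float, people: list[Person]) -> Optional[str]:
    """Assign to the least-utilized qualified person who stays under the cap."""
    candidates = [
        p for p in people
        if p.skill == skill and p.utilization_if(hours) <= UTILIZATION_CAP
    ]
    if not candidates:
        return None  # no safe assignment: surface it as an exception rather than overbook
    best = min(candidates, key=lambda p: p.utilization_if(hours))
    best.booked_hours += hours
    return best.name


def needs_approval(hours: float, hourly_rate: float) -> bool:
    """Trigger an approval when the task's financial exposure crosses the limit."""
    return hours * hourly_rate > APPROVAL_LIMIT


team = [Person("ana", "java", 40, 30), Person("raj", "java", 40, 10)]
print(assign("java", 8, team))     # raj (ana would reach 95%)
print(assign("java", 20, team))    # None (raj would now reach 95%)
print(needs_approval(160, 150.0))  # True: 24,000 exceeds the limit
```

Returning `None` instead of overbooking is the preventative part: the gap is raised at planning time, not discovered later as a missed deadline.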

What Mature Task Automation Tools Look Like

Mature task automation tools are defined by how well they understand the system they operate in, not by how many actions they automate. They share a few core traits:

- Native integration with project, resource, and finance data: Automation runs with full awareness of delivery timelines, team capacity, and financial impact.

- Rule engines that adapt to workload changes: Automation adjusts as priorities shift, demand fluctuates, and teams rebalance.

- Visibility across delivery and leadership layers: Teams see what to do next, while leaders see the operational impact behind it.

- Auditability without manual explanation: Every automated action is traceable without follow-ups or offline reconciliation.

- Automation logic that evolves with scale: Rules remain stable and predictable as project volume and complexity grow.

These are design principles, not just features. Any tool lacking them will eventually shift complexity back onto people.

Takeaway

Task automation is not about speed. It is about coherence. Organizations that automate tasks without rethinking system design end up supervising machines instead of empowering teams.

Those that treat automation as part of their operational architecture create environments where work flows with fewer interventions, fewer surprises, and fewer late-stage corrections. The difference is rarely visible on a feature checklist. It is felt in delivery confidence.

About Kytes

Kytes is an AI-enabled PSA + PPM platform built for organizations that outgrow fragmented project tools. By unifying project management, resource planning, financial tracking, and intelligent task automation, Kytes eliminates the need for disconnected automation layers.

Automation within Kytes is context-aware, system-driven, and designed to scale with operational complexity rather than add to it. This is task automation designed as infrastructure, not a workaround. If your teams are managing automation instead of benefiting from it, it is time to rethink the system behind your tasks. Discover how Kytes approaches task automation at an architectural level.